GDPR compliance is a must for SMEs using AI tools. Developing a robust AI strategy is the first step toward alignment. Here's what you need to know:

Take action now: SMEs can reduce risks, meet legal requirements, and use AI responsibly by following these steps.

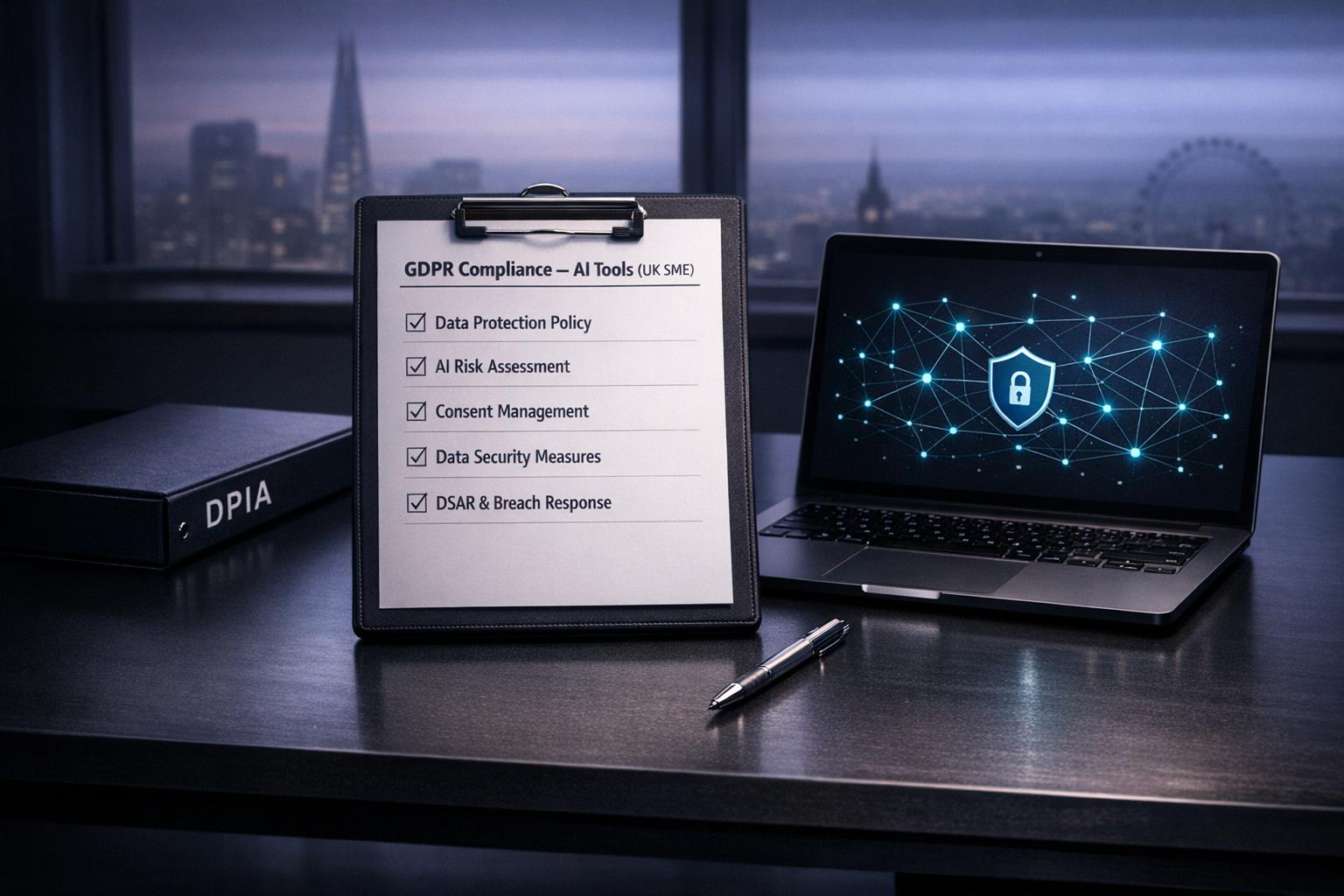

6 Essential Steps for GDPR-Compliant AI Implementation in SMEs

The first step in achieving GDPR compliance is understanding which AI tools your business uses and tracking how personal data flows through them. Many SMEs encounter "Shadow AI" - tools employees use without IT's formal approval. These can include AI code assistants, smart compose features, or generative design tools. As iSyncEvolution highlights:

"Shadow AI poses a significant compliance risk."

To address this, conduct a thorough survey across all departments to identify AI tools and document how they are used. For each tool, create a detailed inventory entry that records the tool's name, vendor, version, data inputs and outputs, business purpose, where the data comes from (how it was collected) and who receives it. It's crucial to distinguish the research and development phase (training and model selection) from the deployment phase (actual use), because these are separate purposes under GDPR and may each require their own lawful basis.
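To make the inventory concrete, here is a minimal sketch of one inventory entry as a Python dataclass, with a check that flags tools missing a documented lawful basis for each phase. The field names, the `trains_in_house` flag and the example tools are assumptions for illustration, not a standard schema; a spreadsheet with the same columns works just as well.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIToolRecord:
    """One row in an AI tool inventory (illustrative fields, not a standard schema)."""
    name: str
    vendor: str
    version: str
    business_purpose: str
    data_inputs: list[str]
    data_outputs: list[str]
    data_origin: str                 # how the data was collected
    recipients: list[str]            # who receives the data
    trains_in_house: bool = False    # do you train or fine-tune the model yourself?
    # Development and deployment are separate purposes, so each gets its own entry.
    lawful_basis_development: Optional[str] = None
    lawful_basis_deployment: Optional[str] = None


def missing_lawful_basis(inventory: list[AIToolRecord]) -> list[tuple[str, str]]:
    """List (tool name, phase) pairs that still lack a documented lawful basis."""
    gaps = []
    for tool in inventory:
        if tool.trains_in_house and tool.lawful_basis_development is None:
            gaps.append((tool.name, "development"))
        if tool.lawful_basis_deployment is None:
            gaps.append((tool.name, "deployment"))
    return gaps


# Hypothetical entries for illustration.
inventory = [
    AIToolRecord(
        name="Meeting summariser", vendor="ExampleVendor Ltd", version="2.1",
        business_purpose="Summarising client calls",
        data_inputs=["call transcripts"], data_outputs=["meeting notes"],
        data_origin="Recorded with client consent",
        recipients=["ExampleVendor Ltd (processor)"],
        lawful_basis_deployment="consent",
    ),
    AIToolRecord(
        name="Churn model", vendor="In-house", version="0.3",
        business_purpose="Predicting customer churn",
        data_inputs=["purchase history"], data_outputs=["churn score"],
        data_origin="Order system", recipients=["Sales team"],
        trains_in_house=True,
        lawful_basis_deployment="legitimate interests",
    ),
]
print(missing_lawful_basis(inventory))
```

Here the in-house churn model is flagged because nobody has documented a lawful basis for training it, even though its day-to-day use is covered.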

Additionally, document all data transfers and storage locations, including intermediate files and movements between internal systems or third-party cloud providers. Machine learning frameworks can be complex, so meticulous documentation is essential. Implementing middleware to sanitise or mask personal data before it reaches your AI systems can help reduce exposure risks.
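As a rough illustration of such middleware, the sketch below masks email addresses, UK mobile numbers and UK postcodes in free text before it is sent to an AI tool. The regular expressions are simplified assumptions and will miss many kinds of personal data (names, street addresses, account numbers); treat this as a starting point to test against your own data, not a complete PII filter.

```python
import re

# Simplified, illustrative patterns; real-world PII detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "UK_PHONE": re.compile(r"(?:\+44\s?7\d{3}|\b07\d{3})\s?\d{3}\s?\d{3}\b"),
    "UK_POSTCODE": re.compile(r"\b[A-Z]{1,2}\d[A-Z\d]?\s?\d[A-Z]{2}\b", re.IGNORECASE),
}


def mask_personal_data(text: str) -> str:
    """Replace each match with a placeholder label before the text leaves your systems."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


prompt = "Contact jane.doe@example.co.uk or 07700 900123, BT1 5GS"
print(mask_personal_data(prompt))
```

Emails are masked first so that the later patterns run on text that no longer contains addresses, which keeps the replacements predictable.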

Once you’ve mapped your AI tools and data flows, use your AI feasibility studies to check whether every piece of data you collect is genuinely necessary.

The GDPR’s data minimisation principle requires personal data to be adequate, relevant and limited to what is necessary for its purpose. The Information Commissioner’s Office (ICO) explains:

"The data minimisation principle does not mean either 'process no personal data' or 'if we process more, we're going to break the law'. The key is that you only process the personal data you need for your purpose".

For each data point, assess its necessity for the AI model's performance. For instance, does the model truly need a full address, or would a postcode suffice? Ensure that every data element has a clear, documented purpose. Be cautious of purpose limitation: data collected for one reason, such as order fulfilment, cannot simply be repurposed to train AI models for marketing insights. The new use must be compatible with the original purpose, or you need a new lawful basis, such as fresh consent.
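One way to enforce this in a data pipeline is an allow-list per documented purpose, as in the sketch below. The purpose name, the field names and the optional step that reduces a postcode to its outward code (the part before the space) are assumptions for illustration.

```python
# Hypothetical purpose-to-fields mapping; each allowed field should match a
# documented reason in your inventory.
ALLOWED_FIELDS = {
    "delivery_time_prediction": {"postcode", "order_value", "order_date"},
}


def outward_code(postcode: str) -> str:
    """Reduce a full UK postcode (e.g. 'BT1 5GS') to its outward code ('BT1')."""
    compact = postcode.replace(" ", "").upper()
    return compact[:-3]  # the inward code is always the last three characters


def minimise(record: dict, purpose: str, coarsen_postcode: bool = False) -> dict:
    """Keep only the fields documented for this purpose.

    An undocumented purpose raises KeyError on purpose: no documented purpose, no data.
    """
    allowed = ALLOWED_FIELDS[purpose]
    minimal = {key: value for key, value in record.items() if key in allowed}
    if coarsen_postcode and "postcode" in minimal:
        minimal["postcode"] = outward_code(minimal["postcode"])
    return minimal


customer = {
    "name": "Jane Doe",
    "address_line_1": "1 High Street",
    "postcode": "bt1 5gs",
    "order_value": 42.5,
    "order_date": "2025-01-10",
}
print(minimise(customer, "delivery_time_prediction"))
print(minimise(customer, "delivery_time_prediction", coarsen_postcode=True))
```

If testing shows the model performs just as well with only the outward code, the coarser option is the one data minimisation points towards.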

A good example comes from a Belfast-based accounting firm in 2026. They subscribed to ChatGPT Enterprise for client work and signed a Data Processing Agreement (DPA) with OpenAI. This ensured client data wasn’t used for model training, and they documented their lawful basis as legitimate interests, namely providing an efficient service to clients. If you rely on legitimate interests rather than consent, carry out a Legitimate Interests Assessment (LIA) to identify the interest, confirm the processing is necessary, and check that it doesn’t override individuals' rights.

Just as mapping and purpose assessments are vital, so too are practices for ensuring data accuracy and enforcing retention limits. GDPR requires personal data to remain accurate and be deleted when no longer necessary. For AI systems, this means establishing clear retention schedules and justifying any extended data storage. Training data should be removed once it becomes outdated and no longer improves model performance.

Automated data validation and audit trails can help track modifications and ensure accuracy. Standardise data deletion processes when retention limits are reached, and assign responsibility for this task - such as appointing an Information Asset Owner in each department.
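A retention schedule can be checked automatically and turned into a worklist for each Information Asset Owner. The sketch below assumes a simple schedule keyed by data category; the categories, periods and owner names are hypothetical and should come from your own documented schedule.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical retention schedule: data category -> maximum retention period.
RETENTION = {
    "chat_logs": timedelta(days=90),
    "training_data": timedelta(days=2 * 365),
}


def deletion_worklist(records, today: date) -> dict[str, list[str]]:
    """Group expired record IDs by the owner responsible for deleting them.

    Each record is a (record_id, category, created_on, owner) tuple. A category
    missing from RETENTION raises KeyError, so every category must be on the schedule.
    """
    worklist = defaultdict(list)
    for record_id, category, created_on, owner in records:
        if today - created_on > RETENTION[category]:
            worklist[owner].append(record_id)
    return dict(worklist)


records = [
    ("r1", "chat_logs", date(2025, 1, 1), "Customer Service IAO"),
    ("r2", "chat_logs", date(2025, 5, 20), "Customer Service IAO"),
    ("r3", "training_data", date(2022, 1, 1), "Data Team IAO"),
]
print(deletion_worklist(records, today=date(2025, 6, 1)))
```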

If technical constraints prevent permanent deletion, ensure the data is stored securely with restricted access, or anonymise it. Some models, such as Support Vector Machines (SVMs), keep a subset of their training examples (the support vectors) inside the trained model, so you need to be able to locate and, where required, erase that data when someone exercises their "right to be forgotten". The ICO also notes that probability-based AI predictions aren’t inherently "inaccurate", but consistently poor results for certain groups could signal problems with the underlying data or feature selection that need addressing.
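To spot the pattern the ICO describes, you can compare error rates across groups. The sketch below assumes you hold, for each prediction, a group label (for example an age band), the predicted outcome and the actual outcome; the 10-percentage-point gap used to flag a group is an arbitrary assumption, not a regulatory threshold.

```python
from collections import defaultdict


def error_rates_by_group(groups, predictions, outcomes) -> dict[str, float]:
    """Share of wrong predictions for each group."""
    totals: dict[str, int] = defaultdict(int)
    errors: dict[str, int] = defaultdict(int)
    for group, predicted, actual in zip(groups, predictions, outcomes):
        totals[group] += 1
        errors[group] += int(predicted != actual)
    return {group: errors[group] / totals[group] for group in totals}


def groups_to_investigate(rates: dict[str, float], max_gap: float = 0.1) -> list[str]:
    """Flag groups whose error rate exceeds the best group's by more than max_gap."""
    best = min(rates.values())
    return sorted(group for group, rate in rates.items() if rate - best > max_gap)


# Hypothetical results for two age bands.
groups = ["18-30"] * 4 + ["65+"] * 4
predictions = [1, 0, 1, 1, 1, 1, 0, 0]
outcomes = [1, 0, 1, 1, 0, 1, 1, 0]

rates = error_rates_by_group(groups, predictions, outcomes)
print(rates)
print(groups_to_investigate(rates))
```

A flagged group is a prompt to examine the training data and features, not proof of unlawful processing.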

Your privacy policy needs to clearly outline how your AI processes personal data. Use straightforward, accessible language to explain key details, such as:

Make sure to outline the lawful basis for each phase of your AI process. If you collect data directly from individuals, provide this information at the time of collection. If the data comes from other sources, share these details within a reasonable period, and at the latest within one month. Additionally, inform individuals of their right to withdraw consent (when consent is the lawful basis) and explain how they can complain to the ICO.

Kate Bennett, CEO of Disruptive LIVE, highlights the risks:

"The ICO does not distinguish between a 5,000-person bank and a 12-person accountancy practice. If a member of your team pastes client data into a free ChatGPT account, you are the data controller and you carry the liability."

With 71% of UK employees using unapproved AI tools at work as of October 2025, it’s essential to categorise data clearly:

Communicate these categories in your privacy policy and review your AI policies every six months to keep up with changes in AI tools and data handling practices. These steps are crucial for securing informed consent in your AI processes.

Clear privacy policies are just the first step. When consent is your lawful basis for processing, you must go further to ensure it’s informed. Present consent requests separately from general terms and conditions, and include:

The UK GDPR specifies:

"The request for consent shall be presented in a manner which is clearly distinguishable from the other matters, in an intelligible and easily accessible form, using clear and plain language." [17,19]

Consent must involve a clear affirmative action, such as ticking a box or clicking a button. Pre-ticked boxes, silence, or inactivity do not qualify. If you rely on consent to process special category data, such as health or biometric information, that consent must be explicit and confirmed in an express statement [2,19].

To make the process seamless, consider using just-in-time notices - brief messages that appear when data is entered - to provide relevant information without disrupting the user experience. Keep a detailed audit trail of consent, including who gave it, when, what information was provided, and how the consent was given. Make withdrawing consent as easy as giving it, for example, through an online form. If there’s uncertainty about the validity of consent, the ICO advises refreshing it every two years. These practices ensure individuals maintain control over their data while aligning with GDPR requirements. For more information on how these regulations impact your business, see our AI and automation FAQs.
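A consent audit trail can be as simple as one record per consent event that captures who, when, what they were shown and how they agreed. The sketch below is a minimal, hypothetical structure; the field names are assumptions, and the roughly two-year refresh window reflects the ICO benchmark mentioned above rather than a fixed legal deadline.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Assumption: treat consent older than roughly two years as due for a refresh.
REFRESH_AFTER = timedelta(days=2 * 365)


@dataclass
class ConsentRecord:
    subject_id: str        # who consented
    purpose: str           # what they consented to
    given_at: datetime     # when
    notice_version: str    # which privacy or just-in-time notice they were shown
    method: str            # how they consented (a clear affirmative action)
    withdrawn_at: Optional[datetime] = None

    def withdraw(self, when: datetime) -> None:
        # Mark the record as withdrawn rather than deleting it, so the audit trail survives.
        self.withdrawn_at = when

    def is_active(self) -> bool:
        return self.withdrawn_at is None

    def needs_refresh(self, now: datetime) -> bool:
        return self.is_active() and now - self.given_at > REFRESH_AFTER


record = ConsentRecord(
    subject_id="cust-001",
    purpose="AI-generated product recommendations",
    given_at=datetime(2023, 1, 1),
    notice_version="privacy-notice-v3",
    method="checkbox ticked by user on signup form",
)
print(record.needs_refresh(datetime(2025, 6, 1)))
record.withdraw(datetime(2025, 6, 2))
print(record.is_active())
```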

Once you’ve secured informed consent, it’s equally important to explain how AI-driven decisions are made. If your AI system makes solely automated decisions with legal or similarly significant effects on individuals, GDPR requires you to give those individuals meaningful information about the logic involved. The Information Commissioner’s Office (ICO) stresses:

"It is unlikely to be considered transparent if you are not open with people about how and why an AI-assisted decision about them was made."

Your explanation should cover:

Translate technical details into clear explanations so individuals can understand and evaluate the decision [22,23]. Use a layered approach: start with a high-level summary, followed by additional technical details (like feature importance or correlations) for those who want more depth. Counterfactual explanations can also help, e.g. "If your credit score were 50 points higher, the loan would have been approved".
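The sketch below shows how such a counterfactual statement can be generated: it searches for the smallest increase in one feature that turns a refusal into an approval. The `loan_decision` scoring rule is a hypothetical toy model, and a one-feature search is a deliberate simplification; dedicated counterfactual methods search across several features and check that the suggested change is realistic.

```python
from typing import Callable, Optional

Applicant = dict[str, int]


def loan_decision(applicant: Applicant) -> bool:
    """Hypothetical toy model standing in for a real decision system."""
    points = applicant["credit_score"] + 2 * applicant["income_k"]
    return points >= 760


def counterfactual_increase(
    decide: Callable[[Applicant], bool],
    applicant: Applicant,
    feature: str,
    step: int,
    max_steps: int = 50,
) -> Optional[int]:
    """Smallest increase in `feature` (in multiples of `step`) that flips a refusal."""
    if decide(applicant):
        return 0
    for i in range(1, max_steps + 1):
        candidate = {**applicant, feature: applicant[feature] + i * step}
        if decide(candidate):
            return i * step
    return None  # no approval found within the search range


applicant = {"credit_score": 650, "income_k": 30}
delta = counterfactual_increase(loan_decision, applicant, "credit_score", step=10)
if delta:
    print(f"If your credit score were {delta} points higher, the loan would have been approved.")
```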

Inform users proactively about AI-driven decisions, including when and why they are applied. If these decisions are communicated online, provide direct contact details or links to staff members who can assist further. This transparency not only ensures GDPR compliance but also fosters trust with your customers.

Effectively managing risks is a cornerstone of ensuring that AI systems comply with GDPR. A well-executed Data Protection Impact Assessment (DPIA) plays a key role in achieving this.

A DPIA is mandatory when your AI processing is likely to result in a high risk to individuals' rights and freedoms. According to UK GDPR Article 35, this includes systematic and extensive profiling that produces legal or similarly significant effects, large-scale processing of special category data (such as health or biometric data), and systematic monitoring of publicly accessible areas on a large scale.

The Information Commissioner's Office (ICO) views AI, machine learning, and deep learning as technologies that demand careful examination. If your AI use case involves other risk factors - like processing data not directly provided by individuals, combining datasets from multiple sources, or targeting vulnerable groups such as children - a DPIA becomes a legal requirement. Ignoring this obligation could lead to steep penalties, with fines reaching up to £8.7 million or 2% of your annual global turnover, whichever is greater.

A DPIA should be initiated early in your project’s development, as part of your AI readiness assessment, so that compliance is built in from the start. Begin by mapping data flows and evaluating the necessity and proportionality of your AI system. Use tools like a likelihood-severity matrix to assess risks, particularly those related to allocative or representational harms. Then, implement appropriate technical and organisational safeguards. Document any residual risks and, if these remain high, involve your Data Protection Officer (DPO) for review. It’s crucial to confirm that your AI system is both necessary and minimally intrusive, while also establishing a lawful basis for processing. If high risks persist after mitigation, you must consult the ICO before proceeding. The ICO aims to give written advice within eight weeks, which can be extended by a further six weeks for complex cases.
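A likelihood-severity matrix can be expressed in a few lines. In the sketch below, the labels follow the ICO's sample DPIA template (remote/possible/probable, minimal/significant/severe, low/medium/high), while the numeric scores, the thresholds and the example risks are assumptions for illustration; calibrate them to your own risk appetite.

```python
# Labels mirror the ICO's sample DPIA template; scores and thresholds are assumptions.
LIKELIHOOD = {"remote": 1, "possible": 2, "probable": 3}
SEVERITY = {"minimal": 1, "significant": 2, "severe": 3}


def risk_rating(likelihood: str, severity: str) -> str:
    score = LIKELIHOOD[likelihood] * SEVERITY[severity]
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


def must_consult_ico(residual: dict[str, tuple[str, str]]) -> bool:
    """Prior consultation is needed if any risk is still high after mitigation."""
    return any(risk_rating(lik, sev) == "high" for lik, sev in residual.values())


# Hypothetical residual risks for a personalised-email project after mitigation.
residual = {
    "Profiling reaches minors (after age checks)": ("remote", "significant"),
    "Filter-bubble effects (after opt-outs)": ("possible", "minimal"),
}
for risk, (lik, sev) in residual.items():
    print(f"{risk}: {risk_rating(lik, sev)}")
print("Consult ICO:", must_consult_ico(residual))
```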

Sarah Chen, Compliance & Data Protection Officer at UK Data Services, highlights the importance of this process:

"A DPIA is not just a box-ticking exercise - it's a strategic tool that helps organisations build privacy by design into their operations while demonstrating accountability to regulators."

By following this structured approach, you can align your DPIA with your overall GDPR compliance efforts.

Let’s consider a practical example: an SME using AI to analyse customer browsing behaviour for personalised email recommendations. In this case, a DPIA is necessary due to the systematic profiling involved.

The assessment might include documenting that the system collects clickstream data, purchase history, and email addresses, all stored securely on a cloud server with restricted access. Confirm that email consent has been obtained and that profiling supports your legitimate interest in targeted marketing. Evaluate potential risks, such as inadvertently targeting vulnerable individuals or reinforcing filter bubbles. To mitigate these risks, you could implement age verification to exclude minors, provide clear opt-out options, and schedule regular reviews to monitor for concept drift. Once the residual risk is deemed "low", ensure your DPO signs off on the assessment.

Staying GDPR compliant isn’t a one-and-done task - it’s an ongoing process that requires structured governance, regular monitoring, and consistent training. These measures build on earlier risk assessments, ensuring your organisation continues to meet GDPR standards.

Start by creating an AI Register that documents every AI tool your organisation uses, including AI features embedded in software such as CRM or accounting systems, and specialised tools like AI-powered customer support platforms. For each tool, note its business purpose, the responsible supervisor, and the type of data it processes. This register acts as a central accountability record.

Complement this with a straightforward, one-page "Acceptable Use" policy. As The Ai Consultancy points out:

"A dense, 50-page document isn't compliance. A clear, one-page internal policy that staff actually understand is".

This policy should clearly outline responsibilities for data handling, ethical principles for AI use, and steps to take when unexpected AI outputs occur.

Incorporate the safeguards required under the Data (Use and Access) Act 2025, which mandates protections for significant automated decisions. These include the right to clear information, the ability to challenge decisions, and meaningful human intervention. Embedding these safeguards into your governance framework from the start ensures compliance with current regulations.

Regular monitoring is key to identifying risks early. Update your Data Protection Impact Assessments (DPIAs) whenever there are changes to the nature, scope, or risks of your data processing. A quarterly review of your AI Register and risk assessments - just one hour every three months - can help catch potential issues before they grow.

Pay attention to user behaviour and override patterns. If AI decisions are being frequently overridden, it may indicate that the model needs retraining or additional data.
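If reviewers log whether they accepted or overrode each AI decision, override rates per tool are easy to track. The sketch below assumes a log of (tool name, was overridden) pairs; the 20% threshold and the tool names are hypothetical.

```python
def override_rates(decisions) -> dict[str, float]:
    """decisions: iterable of (tool_name, was_overridden) pairs from review logs."""
    counts: dict[str, list[int]] = {}
    for tool, overridden in decisions:
        tally = counts.setdefault(tool, [0, 0])  # [total decisions, overrides]
        tally[0] += 1
        tally[1] += int(overridden)
    return {tool: overrides / total for tool, (total, overrides) in counts.items()}


def tools_needing_review(rates: dict[str, float], threshold: float = 0.2) -> list[str]:
    """Tools whose override rate exceeds the (assumed) threshold."""
    return sorted(tool for tool, rate in rates.items() if rate > threshold)


log = (
    [("CV screener", True)] * 3 + [("CV screener", False)] * 7
    + [("Invoice matcher", True)] * 1 + [("Invoice matcher", False)] * 9
)
rates = override_rates(log)
print(rates)
print(tools_needing_review(rates))
```

A near-zero override rate is not automatically good news either: it can mean reviewers are rubber-stamping outputs rather than exercising meaningful human intervention.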

To stay organised, create a centralised compliance repository. This should include your AI registers, data flow maps, DPIAs, and usage policies, all stored in one easily accessible location. This way, if the ICO requests documentation, you can provide it quickly. As the ICO advises:

"The ICO doesn't expect perfection. It expects evidence of thoughtful, risk‐based decision‐making".

Finally, confirm regularly that your AI systems align with data minimisation protocols.

Accountability for AI risks doesn’t rest solely with technical teams. Senior management and Data Protection Officers (DPOs) must also understand these risks, as the ICO underscores:

"You cannot delegate these issues to data scientists or engineering teams. Your senior management, including DPOs, are also accountable for understanding and addressing them appropriately".

Short, 30-minute walkthroughs of your AI usage policies can be more effective than lengthy training sessions. Focus on conversational, practical training tailored to relevant staff. Those responsible for reviewing AI outputs should receive specific guidance on providing "meaningful human intervention." This means they must have both the authority and the know-how to override AI decisions when necessary, rather than simply rubber-stamping them.

Vendor management is another critical element. Make sure your AI vendors have signed Data Processing Agreements (DPAs) and can provide security documentation when required.

Navigating GDPR compliance in the context of AI requires a methodical and well-documented approach. Start by auditing all AI tools and mapping your data flows. Establish a lawful basis for processing data and maintain an AI Register to ensure ongoing oversight. For high-risk AI systems - like those used in recruitment, credit scoring, or large-scale profiling - conduct a Data Protection Impact Assessment (DPIA). Emphasise data minimisation by collecting only what’s absolutely necessary, and use techniques like pseudonymisation to safeguard individual privacy. Privacy notices should also be updated to transparently disclose AI usage and explain how automated decisions are made.

Regular reviews are essential. Schedule quarterly evaluations to monitor changes in vendor terms and update your risk assessments. Provide your team with practical training on responsible AI practices, empowering them to assess and, when needed, override automated decisions. These actions form the foundation of a GDPR-compliant AI strategy.

The cost of non-compliance can be steep. UK GDPR fines can reach £17.5 million or 4% of annual global turnover, whichever is higher (the EU GDPR equivalent is €20 million or 4%), with penalties for UK SMEs ranging from £8,000 to £80,000. Against that, investing approximately £3,000 annually in compliance measures is a prudent choice.

By following these steps, and seeking expert support when necessary, SMEs can ensure they remain compliant while leveraging AI responsibly.

Wingenious provides tailored support to help UK SMEs implement these measures effectively. Our AI Strategy Workshops are designed to identify AI solutions that align with your business goals and regulatory obligations. These workshops create a clear roadmap, balancing innovation with compliance, and cover essential steps such as data audits, DPIAs, and governance frameworks suited to your organisation's specific risk profile and scale.

We also offer AI Tools and Platforms Training to equip your team with the skills needed to use AI responsibly. Our training focuses on practical topics like data minimisation, acceptable use policies, and maintaining human oversight. Delivered in a conversational style, these sessions ensure your staff can grasp and apply the concepts without wading through dense legal jargon.

Through our consultancy services, Wingenious helps you implement ethical AI practices that safeguard personal data while enhancing operational efficiency and driving business growth.

Using AI doesn't automatically mean you are processing personal data under UK GDPR. Whether UK GDPR applies depends on whether personal data is processed at any point in the AI system's development or deployment. If no personal data is involved at any stage, UK GDPR does not apply to that processing.

To keep customer data safe and prevent employees from using unauthorised "Shadow AI" tools, it's essential to set up a solid governance framework. This means having clear policies, proper oversight, and technical controls in place. Here's how you can tackle this issue effectively:

To take it a step further, you can classify tools based on how sensitive the data they handle is. For example:

Additionally, maintaining an AI Asset Register can help you track which tools are approved and ensure that only authorised ones are used for handling sensitive customer data. This structured approach not only reduces risks but also builds trust in how your organisation manages data.
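To make the register enforceable, each approved tool can carry the highest data sensitivity it is cleared for, and anything not on the register is refused. The sensitivity tiers and tool names below are hypothetical; substitute your own classification scheme.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    # Hypothetical tiers, ordered from least to most sensitive.
    PUBLIC = 0
    INTERNAL = 1
    CUSTOMER_PERSONAL = 2
    SPECIAL_CATEGORY = 3


# Hypothetical AI Asset Register: tool -> highest sensitivity it is approved for.
APPROVED_TOOLS = {
    "Enterprise assistant (DPA signed)": Sensitivity.CUSTOMER_PERSONAL,
    "Free public chatbot": Sensitivity.PUBLIC,
}


def is_permitted(tool: str, data: Sensitivity) -> bool:
    """Tools missing from the register (Shadow AI) are never permitted."""
    ceiling = APPROVED_TOOLS.get(tool)
    return ceiling is not None and data <= ceiling


print(is_permitted("Enterprise assistant (DPA signed)", Sensitivity.CUSTOMER_PERSONAL))
print(is_permitted("Free public chatbot", Sensitivity.CUSTOMER_PERSONAL))
print(is_permitted("Unregistered browser plugin", Sensitivity.PUBLIC))
```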

Handling 'right to be forgotten' requests under GDPR presents a real challenge when it comes to AI models. While organisations are legally required to address these requests, completely removing personal data that's been integrated into a model's parameters is often not technically possible. In many cases, this could mean retraining or fine-tuning the model to ensure the data is excluded moving forward.
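Planning for this starts with knowing which model versions were trained on whose data. The sketch below assumes you log the subject IDs used for each training run (a hypothetical lineage record): an erasure request then tells you which models are affected, and a suppression list keeps that person out of future training sets.

```python
from dataclasses import dataclass, field


@dataclass
class ErasureTracker:
    # Hypothetical lineage log: model version -> subject IDs used to train it.
    lineage: dict[str, set[str]] = field(default_factory=dict)
    suppressed: set[str] = field(default_factory=set)

    def record_training(self, version: str, records: list[dict]) -> None:
        self.lineage[version] = {r["subject_id"] for r in records}

    def erase(self, subject_id: str) -> list[str]:
        """Suppress the subject and return the model versions that used their data."""
        self.suppressed.add(subject_id)
        return sorted(v for v, ids in self.lineage.items() if subject_id in ids)

    def training_set(self, records: list[dict]) -> list[dict]:
        """Exclude suppressed subjects from the next retraining or fine-tuning run."""
        return [r for r in records if r["subject_id"] not in self.suppressed]


data = [{"subject_id": "s1", "spend": 120}, {"subject_id": "s2", "spend": 80}]
tracker = ErasureTracker()
tracker.record_training("v1", data)
print(tracker.erase("s1"))        # model versions to retrain or review
print(tracker.training_set(data))
```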

To navigate this, it's crucial for organisations to be upfront about these limitations. Establishing clear governance processes from the outset can help manage compliance while acknowledging the technical hurdles involved. Balancing these aspects is no small task, but early planning and transparency go a long way.
